People and ideas behind the glasses of the future: research stories from the Smart Eyewear Lab

Glasses: everyday objects in common use, but also protagonists of a technological revolution that spans various fields of research, such as photonics, electronics, artificial intelligence, and many others. The Smart Eyewear Lab, established through the collaboration between Politecnico di Milano and EssilorLuxottica, is at the heart of this transformation: here, around one hundred researchers work every day on the challenges that separate traditional glasses from smart devices capable of enriching our experience of the world.

Behind an apparently simple frame lies a complex ecosystem of innovation: miniaturized sensors, artificial intelligence algorithms, eye tracking technologies, and holographic displays. At the intersection of different research streams, including Eye Tracking, Camera and Sensing, and Optical Integration, researchers work side by side to integrate hardware and software into a very small space, without compromising design and comfort.

The glasses of the future will no longer be just accessories, but will shape new ways of perceiving, analyzing, and interacting with reality. They will be true digital assistants, capable of understanding context, monitoring health, improving communication, and projecting information directly in front of our eyes.

In this second journey inside the Smart Eyewear Lab (you can find the first story here), we were guided by some of the most promising researchers and the most experienced professors of Politecnico, who told us how, among circuits, mathematical models, and special lenses, the future of smart glasses is being built. This work, carried out in synergy with EssilorLuxottica scientists, requires cross-disciplinary skills and a shared vision, where every design choice—from the shape of a lens to the position of a sensor—has direct implications for the end-user experience.

Eye tracking

Eye tracking makes it possible to trace eye movements and recognize the point of interest observed by the user. To explore this key technology of smart glasses and the research challenges it entails, we met two researchers from the Department of Electronics, Information and Bioengineering at Politecnico di Milano.

Marco Carminati is a professor of Electronics at Politecnico di Milano and project manager of the Eye Tracking stream for the university. He has long been involved in sensing and microelectronics for light sensors, with a particular focus on optoelectronics. Alongside him, Marco Paracchini, a young researcher in Telecommunications, carries forward experimentation and the development of new technologies for eye tracking.

What does the Eye Tracking stream focus on?

MC: The Eye Tracking stream brings together different skills and technologies, all oriented toward a common goal: developing systems capable of following and tracking eye movements. In smart glasses, this function has a dual purpose: on the one hand, it allows the human–machine interface to be guided by gaze; on the other, it provides valuable information enabling the estimation of a person’s health and psychophysical well-being, such as levels of fatigue, concentration, and attention. Around thirty Politecnico researchers are part of this group, working to find solutions that are precise but also low in energy consumption, a fundamental requirement for the wearable devices of the future.

What are the main technologies you are developing?

MC: Currently, the most widespread solutions are based on infrared microcameras integrated into the frame, but these require a lot of energy and computing power. For this reason, we are exploring various alternatives, such as neuromorphic cameras that mimic the functioning of the human eye, or even simpler systems that use single photodetectors, built using different techniques, to infer eye position. One of the main lines of work on this latter technology is led by Marco Paracchini.

Marco Paracchini, tell us about your project

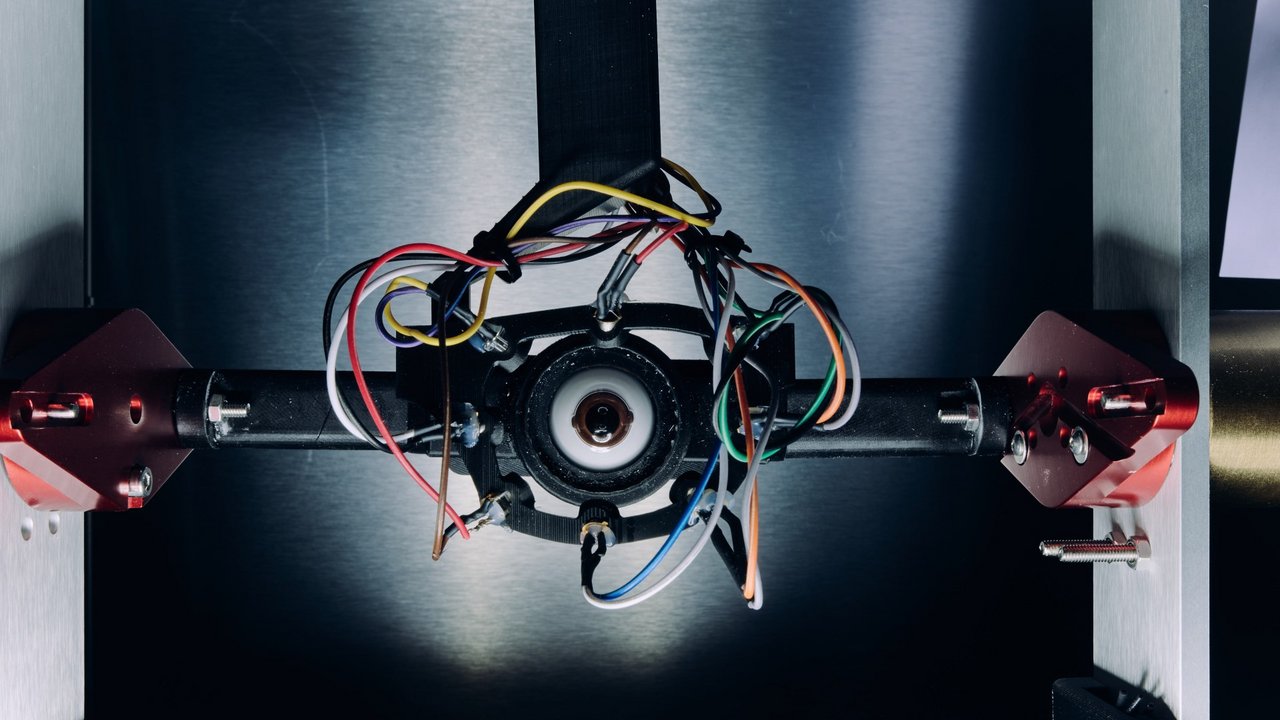

MP: We are working on a technology that uses a set of LEDs and photodiodes mounted on the glasses. The LEDs illuminate the eye and, based on the signals collected by the photodiodes, we can infer the gaze position. This is a known technology, but at the Smart Eyewear Lab we are studying further developments. One of the main challenges is robustness: facial structure varies from person to person, and this affects the acquired signals. To make the system more adaptable, we are carrying out various tests both on mechanical systems, such as a gyroscope that moves a synthetic eye, and on robotic systems for moving artificial eyes.
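As a rough illustration of how gaze might be inferred from a handful of photodiode signals, the sketch below calibrates on known gaze targets and then estimates a new gaze point from the closest calibration readings. It is a toy under stated assumptions, not the lab's method: the sensor layout `SENSORS`, the forward model `simulate_photodiodes`, and the nearest-neighbour estimator are all hypothetical and chosen only for simplicity.

```python
import math

# Hypothetical layout: four photodiodes around the eye, in arbitrary units.
SENSORS = [(-1.0, 0.0), (1.0, 0.0), (0.0, -1.0), (0.0, 1.0)]


def simulate_photodiodes(gaze_x, gaze_y):
    """Toy stand-in for real measurements: each reading is simply the
    distance between the gaze point and one photodiode (not real optics)."""
    return [math.hypot(gaze_x - sx, gaze_y - sy) for sx, sy in SENSORS]


def calibrate(targets):
    """Store the readings observed while the user looks at known targets."""
    return [(simulate_photodiodes(x, y), (x, y)) for x, y in targets]


def estimate_gaze(readings, calibration, k=3):
    """Estimate gaze as the inverse-distance-weighted mean of the k
    calibration points whose readings are closest to the new readings."""
    nearest = sorted(
        (math.dist(readings, ref), gaze) for ref, gaze in calibration
    )[:k]
    if nearest[0][0] == 0.0:  # exact match with a calibration point
        return nearest[0][1]
    weights = [1.0 / d for d, _ in nearest]
    total = sum(weights)
    x = sum(w * gaze[0] for w, (_, gaze) in zip(weights, nearest)) / total
    y = sum(w * gaze[1] for w, (_, gaze) in zip(weights, nearest)) / total
    return (x, y)


# Calibration on a 5 x 5 grid of targets between -1 and 1.
GRID = [(i / 2, j / 2) for i in range(-2, 3) for j in range(-2, 3)]
CALIBRATION = calibrate(GRID)

estimate = estimate_gaze(simulate_photodiodes(0.3, 0.2), CALIBRATION)
print(f"estimated gaze: ({estimate[0]:.2f}, {estimate[1]:.2f})")
```

In this toy picture, person-to-person variation would show up as a different calibration table for each user, which is one way to see why robustness is a central concern.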

What other technological challenges are you addressing?

MP: A critical aspect is “slippage,” that is, the slight displacement of the glasses from their optimal position. This can alter the collected data, so our algorithms must be able to adapt in real time. Moreover, the entire system must operate on very compact, ultra-low-power chips that can be integrated directly into the frame. We already have prototypes in this regard.

MC: Optimizing algorithms for low-power platforms is one of the most innovative challenges. Traditional systems used in sensing rely on computers or smartphones, which use fairly powerful processors. We seek the right compromise between simplicity and accuracy, using statistical and machine learning methods to reduce computational load. To conduct research on this optimization, we work on three levels: numerical simulation, testing on artificial eyes, and validation in real contexts.

What applications can eye tracking have in smart glasses?

MC: It is often said, somewhat poetically, that the eye is the mirror of the soul; for scientific research this is partly true: we can say that the eye is the mirror of the brain. Eye movements reflect health status, concentration, and fatigue. Continuous tracking, made possible by smart glasses, could in the future also provide useful tools in the medical field, for example for screening or monitoring certain conditions.

Another important application for us is certainly the improvement of the human–machine interface: eye tracking allows us to trace where the user is focusing their attention, for example on an augmented reality display projecting information onto a lens. Put simply, it means using the eyes instead of a mouse.

Here lies the challenging aspect of the research: existing eye tracking systems, mostly used in ergonomics research and marketing studies, analyze two-dimensional scenes; moreover, in these cases the devices are worn by the user for a limited time and for a specific purpose. The real challenge we are addressing at the Smart Eyewear Lab is to make this technology not only adaptable to a three-dimensional scene, but also transparent and wearable all day, becoming part of people’s everyday lives.

MP: The range of eye tracking applications is broad; the goal of our stream is not to focus on one or more of them, but to develop enabling technological solutions. These technologies could, for example, allow taking photos that capture not the entire scene, but only the part on which the user is focusing; or they could be useful for driving safety, monitoring that the gaze is directed at the road. Eye tracking can also make devices more accessible to people with disabilities, allowing, for example, composing sentences or selecting objects on a screen simply with the gaze.

What is the current stage of research compared to products already on the market?

MC: Eye tracking is already a mature technology, but the technologies we are developing will likely be part of a future generation of smart glasses and are not yet present in currently available products. There is certainly a great deal of interest in the industrial world in transforming glasses into a technological device capable of enhancing human functions. This attention could accelerate the transition from laboratory to product.

In this sense, a research center such as the Smart Eyewear Lab is strategic, because it combines the experience in materials, engineering, and manufacturing of a leading company in the sector such as EssilorLuxottica with the expertise in electronics, computer science, and photonics of a major university such as Politecnico di Milano.

Tell us what it is like to work at the Smart Eyewear Lab

MP: In the lab there is a lot of cross-contamination between different research fields: from hardware and electronics to software and algorithms. I have found myself dealing with a wide variety of things: setting up mechanical systems, acquiring and analyzing data, developing algorithms. It is a 360-degree activity that has allowed me, and still allows me, to grow a lot.

MC: The Smart Eyewear Lab is a true meeting point between university and industry, but also between different research groups within Politecnico. Here, students, PhD candidates, and researchers with different backgrounds meet every day to collaborate, united by a common and at the same time ambitious goal. It is a young, dynamic environment rich in cross-fertilization; I think this aspect is of enormous value.

Camera and sensors

To transform glasses into devices capable of perceiving and interpreting the surrounding world, the Smart Eyewear Lab has dedicated a research stream to the integration of cameras and sensors into glasses. Two researchers from Politecnico di Milano, Andrea Giudici, an electronic engineer, and Simone Mentasti, a computer scientist, tell us about the results achieved and the technological challenges to be addressed in the field of microelectronics and artificial intelligence.

Tell us about your background

SM: I studied computer science and then completed a multidisciplinary PhD involving mechanics and computer science. The PhD project, funded by Regione Lombardia, aimed at developing an autonomous vehicle. I worked on sensing and data acquisition, which are fundamental for recognizing vehicles, pedestrians, and road signs. After the PhD, I continued doing research in the AIRLab of Politecnico di Milano, where I focus on sensor data analysis, especially in robotics.

When the collaboration agreement between Politecnico and EssilorLuxottica was established, it was natural for me to contribute to the Smart Eyewear Lab. For smart glasses, in fact, the analysis of sensory data is a fundamental tool to understand what the user is doing and what is happening in the surrounding environment.

AG: I studied electronic engineering at Politecnico and continued with a PhD, during which I worked on two projects. The first was with NASA, where I developed integrated circuits for satellite Lidar applications; the goal was to perform a stratigraphic analysis of the atmosphere and the first meters of the ocean, identifying areas of phytoplankton, the photosynthesizing microorganisms at the base of the marine food chain. The second project was with HFSP (Human Frontier Science Program); its aim was to observe and study the dynamic 3D structure of a protein at atomic scale while it performs its biological function, and how that structure changes over time. I also actively participated in the creation and development of a startup in the automotive sector, working on the design, prototyping, and industrialization of electronic products.

For me as well, joining the Smart Eyewear Lab was natural: after finishing the PhD, I was looking for a new challenge and immediately seized the opportunity.

What do you work on in the Camera and Sensors stream?

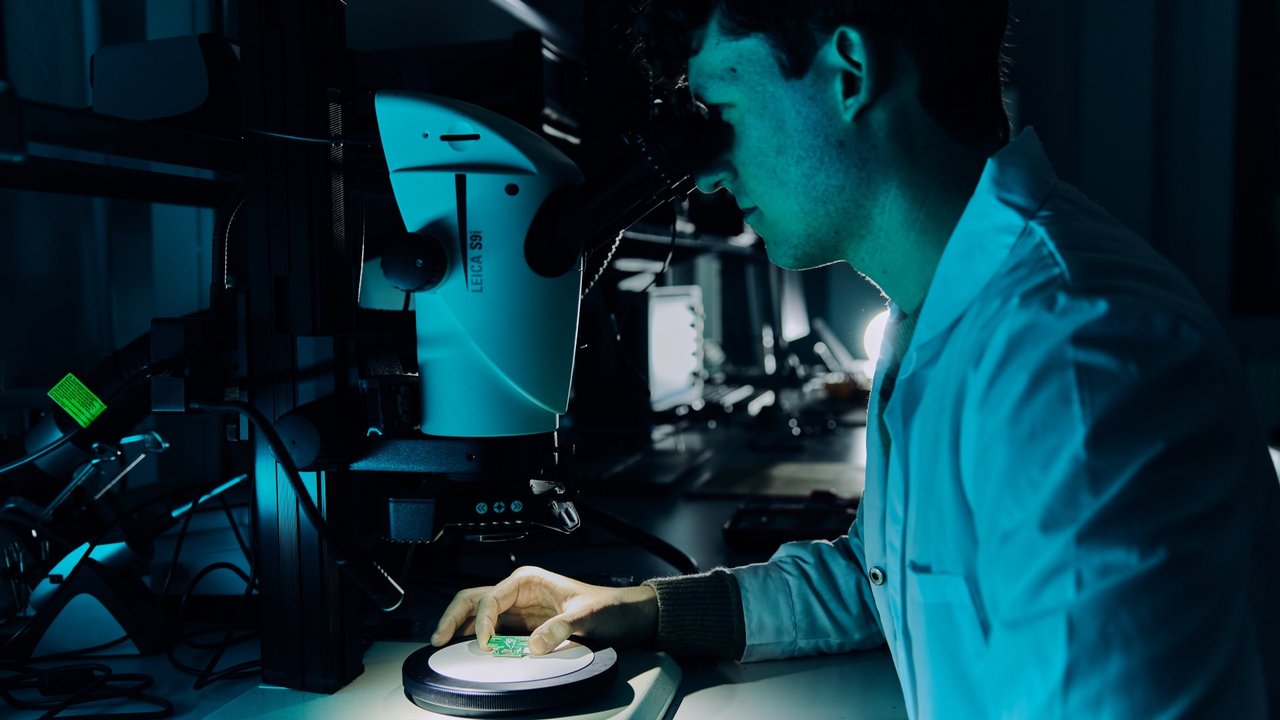

AG: My group, composed of researchers and students, focuses on hardware development: we design and build the electronics to be integrated into the glasses, taking into account very strict constraints. The glasses are small, must be comfortable and aesthetically pleasing; therefore, every electronic component must perfectly fit the geometry and design of the frame. We work closely with EssilorLuxottica, which also supports us in the mechanical aspects and provides guidelines for ergonomics.

Once the components are selected and positioned, our work does not stop there: we must test and program the electronics. We write the firmware, that is, the code that allows the processing unit to communicate with all peripherals: cameras, sensors, microphones, accelerometers. The challenge is significant because the battery is small and there is too little space to accommodate, for example, electronics with the bulk of traditional components.

SM: My group works on artificial intelligence algorithms that analyze data from sensors. The glasses must be “intelligent”: understanding what the user is doing, where they are, and what is happening around them. The problem is that we cannot use the same complex AI models that run on a server, because the microcontrollers in the glasses have much tighter constraints on computing power, memory, and energy. Our research aims to develop simple but effective algorithms, capable of interpreting images and signals in real time, directly on the glasses.

What information can smart glasses provide?

SM: A concrete example: glasses can recognize that the user is in a meeting or driving and deactivate notifications to avoid distractions. To do this, AI models mainly analyze images captured by integrated cameras, because they are rich in information. However, processing images is computationally expensive, so we often also integrate data from other sensors, such as microphones and accelerometers, to reduce the load.

Placing cameras and sensors inside glasses offers clear advantages over placing them, for example, in a watch. If we want to understand what the user is doing and their context, the camera on the glasses is much more functional: it adopts the same point of view as the eye and “sees” exactly what the user sees.
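The idea of combining camera images with cheaper sensors to reduce the processing load can be illustrated with a simple gating scheme: the expensive image model runs only when the accelerometer or microphone suggests that the context may have changed. This is a minimal sketch under stated assumptions, not the lab's pipeline; the function names and both thresholds are hypothetical.

```python
from statistics import pvariance

# Hypothetical thresholds; real values would have to be tuned on recorded data.
MOTION_VARIANCE_THRESHOLD = 0.05
SOUND_LEVEL_THRESHOLD = 0.3


def should_run_camera_model(accel_window, mic_level):
    """Return True when cheap sensors suggest the context may have changed.

    accel_window: recent accelerometer magnitudes (arbitrary units)
    mic_level: recent average microphone level, normalized to 0..1
    """
    moving = pvariance(accel_window) > MOTION_VARIANCE_THRESHOLD
    noisy = mic_level > SOUND_LEVEL_THRESHOLD
    return moving or noisy


def count_camera_runs(samples):
    """Count how many samples would trigger the expensive image model."""
    return sum(should_run_camera_model(a, m) for a, m in samples)


samples = [
    ([1.0, 1.0, 1.0, 1.0], 0.1),  # still and quiet: skip the camera
    ([0.0, 1.0, 0.0, 1.0], 0.1),  # moving: run the camera model
    ([1.0, 1.0, 1.0, 1.0], 0.5),  # still but noisy: run the camera model
]
print(count_camera_runs(samples), "of", len(samples), "samples use the camera")
```

The design choice here is that a variance and a comparison cost almost nothing on a microcontroller, so the camera model is invoked only when it is likely to add information.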

How will the future generation of smart glasses differ from existing products?

AG: The major challenge is to ensure that all processing takes place directly on the glasses, without sending data to a smartphone or an external server. This is important both for privacy (for example, GDPR places strict conditions on sending images of people to external servers) and for energy efficiency. We are also studying new sensors, always with the aim of reducing consumption and optimizing data processing.

How do you think your stream will evolve in the coming years?

AG: Algorithms evolve rapidly, but hardware development is slower. New neural accelerators are now emerging that emulate the functioning of the brain and promise lower consumption. This is a fundamental aspect considering that the battery of smart glasses is about twenty times smaller than that of a smartphone.

The market is very attentive to these products and investments are growing. Several startups are working on new neural accelerators, and this could further foster innovation.

What have been the most significant moments of your research?

AG: My research path has been very rewarding, because I have faced challenges I had never encountered before. Every time a new electronic board works, it is a great joy for us, because behind it there are months or even years of work. Seeing the first video images captured by our device was exciting, because in those moments we saw all the individual pieces we had worked on integrate perfectly and function together.

The most beautiful thing is knowing that everything was born in this lab: from the idea of how to make the hardware work to the firmware up to the final model.

SM: For me as well, the work has been very rewarding as a researcher. In these three years at the Smart Eyewear Lab we have written publications, participated in international conferences, and organized workshops. On these occasions we have seen our research recognized at a global level.

What differentiates the research experience at the Smart Eyewear Lab from other collaborations?

SM: At Politecnico we have many collaborations with companies, and in my academic career I have often worked on industrial projects. At the Smart Eyewear Lab, however, the collaboration between university and company is daily and very close. In a joint lab, objectives are shared and long-term, and this creates a common vision among research groups.

AG: One of the most stimulating aspects is the collaboration among different research groups. Here the cross-contamination of skills is continuous: among electronic engineers, computer scientists, biomedical engineers, and many others. I think this is a fundamental and necessary asset when working on complex systems.

Optical integration

Among the most important challenges for the future generation of smart glasses is the creation of augmented reality using holography to project realistic three-dimensional images. The exploration of possible solutions in this cutting-edge and highly challenging technological field is entrusted to the Optical Integration stream. We met Marco Astarita and Alessandro Cerioni, PhD candidates at Politecnico di Milano, and Professor Gianluca Valentini from the Department of Physics, to hear about their research in a field where physics, engineering, and innovation intertwine every day.

Tell us about yourselves and how you arrived at the Smart Eyewear Lab

MA: I am originally from Naples and studied Physics at the University of Naples Federico II. I first encountered holography during my thesis, working on applications for lithography. Three years ago, when the Smart Eyewear Lab was still taking shape, an acquaintance told me they were looking for people with expertise in holograms for augmented reality. I applied for the PhD call because it was the most ambitious and stimulating project I had found.

AC: I come from Jesi and studied Engineering Physics at Politecnico di Milano. I had won a PhD scholarship in Stockholm, but the day before signing the contract Professor Valentini told me about the Smart Eyewear Lab project. I decided to take the risk and came to Milan, even though holography was not my main field: I had mainly worked on superconductors. Thanks also to Marco, I managed to fill the gap and now I work on holography applied to smart glasses.

What does the Optical Integration stream focus on?

MA: We focus on integrating augmented reality into glasses; that is, we must find a way to project images directly into the eye. On paper, holography is a very promising technology, because it allows creating truly three-dimensional images, respecting all the visual cues that the brain uses to perceive depth. However, digital holography, especially dynamic holography, is still a technology under refinement.

AC: Unlike other streams, we do not yet have a prototype of glasses with augmented reality: we work on a scaled-down laboratory setup mounted on an optical table. Our task is to investigate and develop the technology, so that when components for creating integrable holographic displays become available, we will be ready. It is a bit like in quantum computing: technological foundations and advanced algorithms are developed while waiting for hardware to catch up.

Holograms are widely discussed, but the technology still seems far away.

MA: The word “hologram” is often used improperly. What we see at concerts or on some smartphones are not real holograms, but optical effects such as Pepper’s Ghost or stereoscopic displays. These technologies only give an appearance of three-dimensionality, but lack many properties that holography can offer. For example, most current displays use stereoscopy, creating slightly different images for each eye, but this can cause discomfort because the perception of three-dimensionality conflicts with the convergence of the eyeballs and the accommodation of the crystalline lens. Holography, on the other hand, solves this problem by restoring a natural perception of depth.

What makes holography so special from a technological point of view?

MA: The three-dimensionality of a hologram derives from many elements: color, focus at different depths, and above all the possibility of recreating the phase of light, not just its intensity. In practice, holography allows deceiving the brain into believing that light comes from a real object, not from a display. It is like the difference between looking at a photo of a window and looking through the window: with holography, when you change the viewing angle, the perspective of the image also changes, just as in reality.

GV: From a physical point of view, everything is based on controlling the phase of light. Lenses and our eyes work in this way: light passes through different points of the lens and reaches the focus at the same instant, that is, with the same phase. A three-dimensional object has different focal planes that our eye scans to perceive depth using the contraction of the crystalline lens. In three-dimensional films, quite popular some years ago, the eye was deceived with different images seen by the two eyes so as to give the impression that, for example, a character was approaching us almost to touch us. However, the focal plane was always that of the screen because the image did not control the phase of light. This is a concrete example of the misalignment between binocular vision, convergence, and accommodation mentioned earlier by Marco. Some subjects experienced nausea and disorientation because of this issue, which we absolutely want to avoid. Holography controls the phase of light to reconstruct three-dimensional images, whereas a traditional photograph cannot do so.
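The role of phase described above can be written compactly. The following is a standard textbook sketch, not the lab's own formulation; the symbols $A$, $\varphi$, $\lambda$, and $f$ are introduced here purely for illustration. A light wave at a point $(x, y)$ on a plane can be described by a complex amplitude, while a camera or a conventional display deals only with its intensity:

$$
U(x, y) = A(x, y)\, e^{i \varphi(x, y)}, \qquad I(x, y) = |U(x, y)|^2 = A(x, y)^2
$$

The phase $\varphi$ does not appear in $I$, which is why a photograph cannot reproduce depth of focus. An ideal thin lens of focal length $f$, in the paraxial approximation and with one common sign convention, acts on the phase alone:

$$
\varphi_{\text{lens}}(x, y) = -\frac{2\pi}{\lambda}\, \frac{x^2 + y^2}{2 f}
$$

A hologram that imposes a chosen $\varphi(x, y)$ can therefore place image points at different focal depths, something an intensity-only image cannot do.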

What are the main engineering challenges you face in the lab?

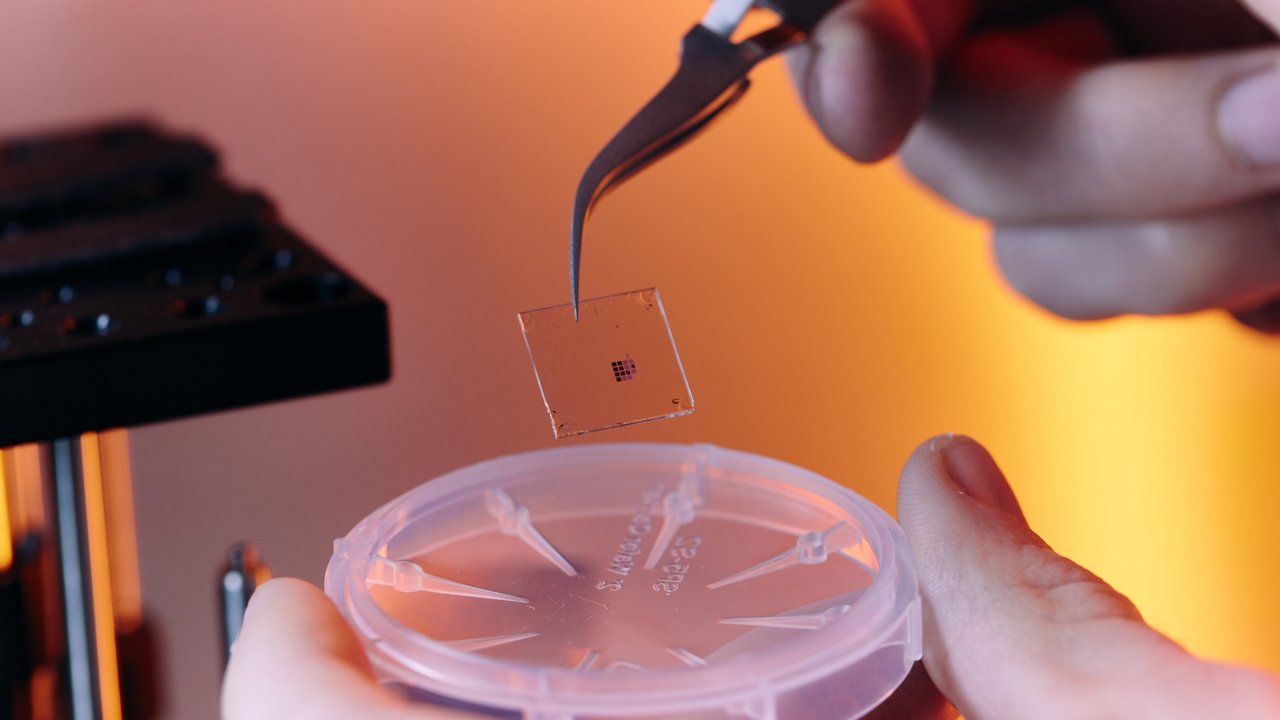

AC: One of the most important activities is reducing “noise” in holograms: this does not refer to sound, but to unwanted bright spots that can ruin image quality. We use spatial light modulators to provide phase information, but between the pixels there is always a portion of unmodulated light that must be eliminated. We have developed a proprietary solution that, as noise-canceling headphones do with sound, creates an “opposite” light wave to cancel the disturbance.

GV: The solution we have studied to reduce noise is innovative because, unlike others, it does not require additional hardware, as it uses the same system to generate both the useful signal and the cancellation signal. It is an important step forward toward the miniaturization and integration of these technologies into glasses.

MA: The noise problem is not trivial, because we start from a very intense laser beam that, if not properly modulated, could be very disturbing for the eye. Our solution distributes light in a safe and comfortable way, without compromising image quality.
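The general principle behind an “opposite” light wave is destructive interference: a wave of equal amplitude and a phase shifted by π cancels the original. The sketch below shows this at a single point, with light waves represented as complex amplitudes. It illustrates only the textbook principle, not the lab's proprietary solution; all values and function names are assumptions chosen for illustration.

```python
import cmath
import math


def intensity(field):
    """Intensity of a light wave represented by a complex amplitude."""
    return abs(field) ** 2


def cancellation_wave(disturbance):
    """Wave with the same amplitude as the disturbance and phase shifted by pi."""
    return cmath.rect(abs(disturbance), cmath.phase(disturbance) + math.pi)


# Illustrative values only (arbitrary units).
signal = cmath.rect(1.0, math.radians(10))
disturbance = cmath.rect(0.2, math.radians(40))

before = intensity(signal + disturbance)
after = intensity(signal + disturbance + cancellation_wave(disturbance))
print(f"intensity with disturbance: {before:.3f}")
print(f"intensity after cancellation: {after:.3f}")
```

After cancellation, the intensity returns to that of the useful signal alone, which is the effect the headphone analogy describes.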

What are the practical applications of your research?

MA: The key word is augmented reality. There is often confusion between virtual, mixed, and augmented reality. Mixed reality combines environmental context with digital images; the context we see is not exactly the real one, but an image captured by cameras and reprojected. Virtual reality is completely “artificial” and can only be seen using headsets.

Augmented reality, which we aim for, allows observing the real world through transparent lenses, adding digital information and computer-generated 3D images without altering, replacing, or digitally reacquiring the surrounding real environment. We can display directions, discreet notifications, or vital parameters measured by sensors integrated into the glasses themselves, while maintaining a natural view of the world. The goal is not to change reality, but to add useful content while leaving everything physical exactly as it is, ensuring natural interaction with the environment and above all with the people around us.

GV: From the beginning of the project, with EssilorLuxottica we have focused on developing technologies that could be integrated into normal glasses, with minimal compromises in terms of weight and size, consistent with their tradition of excellence in eyewear. For this reason, we do not want to create bulky headsets, but glasses that are worn every day, as attractive and comfortable as traditional ones, but with advanced digital functionalities. It is a challenge that combines basic and applied research, and that can truly change the way we experience technology.

Does the Optical Integration stream deal only with holography?

GV: A very current topic is metasurfaces, which represent the evolution of modern optics. These are extremely thin surfaces that can manipulate light much more efficiently than traditional lenses, making it possible to direct light exactly where it is needed, for example directly into the pupil. Metasurfaces are a central element in the development of smart eyewear because they could be fundamental for the interface between eye and lens.

How are academic and industrial skills integrated in this project?

GV: The Smart Eyewear Lab project represents a truly unique experience for Politecnico di Milano, both in terms of the mode of collaboration and its scale. The university is used to working with companies on many research projects, but with EssilorLuxottica we have taken a further step: we have created a true joint laboratory, hosted in a university building, where around one hundred researchers from Politecnico and the company work side by side every day. It is an unprecedented initiative in the Italian university landscape, supported by a long-term and highly valuable investment.

This collaboration has become a successful model for the relationship between university and industry. At the Smart Eyewear Lab, research is not limited to adapting existing technologies for specific purposes, but aims to create new technological foundations that can be used for the development of future smart eyewear. For Politecnico, it is an extraordinary opportunity to combine basic and applied research and to offer many young researchers the chance to grow professionally in a stimulating and innovative environment.